Research Terms

Agriculture, Artificial Intelligence

This AI-based method uses plant reflectance data to identify and differentiate disease(s) in crops, allowing for early and efficient disease detection. Early, rapid, and accurate plant disease identification is essential for implementing management strategies in time to minimize pathogen spread. However, diagnosis based on visual symptoms can be inaccurate and subjective, since different diseases may present similar symptoms, and visible symptoms may appear only in later disease stages. Abiotic factors such as drought, salinity damage, and nutrient deficiencies can also produce symptoms similar to those caused by pathogens. Case studies of citrus canker in orange trees and laurel wilt in avocado trees highlight these drawbacks.

Sugar Belle citrus trees infected with citrus canker do not outwardly present symptoms in the early stages of infection. The trees often appear healthy, because the bacterial growth takes a few months to develop and become visible. In the case of laurel wilt in avocado trees, early-stage symptoms appear as yellowish leaves, which turn brownish with necrotic and curled areas in the late stages of infection. These symptoms resemble those of nutritional deficiencies in avocado trees. Lab analysis of plant samples for disease detection is time-consuming and labor-intensive, highlighting the need for a faster method of accurate, early detection. The ability to detect plant diseases and stress factors in the early stages, even before symptoms visually appear, can help growers select and implement effective management tactics.

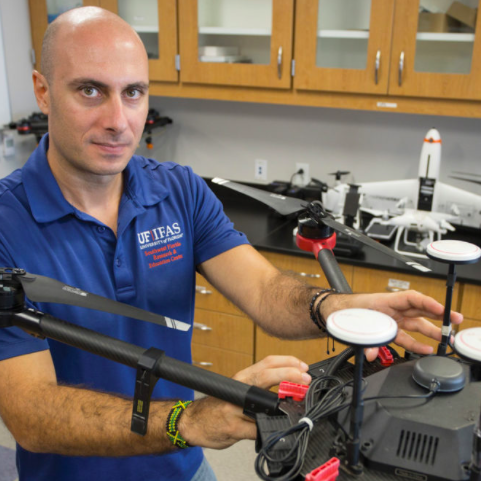

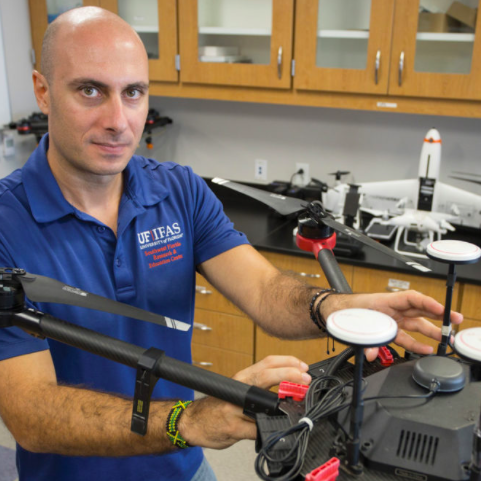

Researchers at the University of Florida developed an AI-based method for extracting plant reflectance data and developing biomarker signatures for plant identification and early disease detection. The data analytic methodology rapidly analyzes hyperspectral imaging data collected by unmanned aerial vehicles (UAV) to identify issues at an earlier stage. It provides the opportunity for interventional management to reduce losses, improving efficiency and reducing costs associated with crop management.

AI-based data analytic methodology rapidly analyzes UAV-collected hyperspectral and multispectral imaging data for accurate and efficient detection and classification of disease states for various crop plants

This AI-based data analytic method rapidly analyzes UAV-collected hyperspectral and multispectral imaging data to identify plant diseases in the early stages of infection. The method takes the Karhunen-Loeve expansion (KLE) of spectral reflectance data from healthy and diseased plants to identify a set of basis functions that represents the distribution of the reflected signal energy. Through multivariate KLE analysis, a frequency reconstruction converts this information into a wave function, forming a unique biomarker. These spectral identification biomarkers enable the development of a database containing healthy plant signatures and characteristic signatures for various diseases, nutritional deficiencies, and abiotic stresses. This allows for less invasive classification and diagnostic techniques, early disease detection, and rapid remedial action.
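To make the idea of a KLE-derived signature more concrete, the following is a minimal sketch of a discrete Karhunen-Loeve expansion applied to reflectance spectra. It is not the researchers' implementation: the function names (`kle_basis`, `kle_signature`, `classify`), the synthetic spectra, and the nearest-centroid lookup are all assumptions made for illustration, and the frequency reconstruction step described above is not reproduced here.

```python
import numpy as np

def kle_basis(spectra, n_modes):
    """Discrete KLE of reflectance spectra (rows = samples, columns = bands).

    Returns the mean spectrum, the n_modes leading eigenvectors of the sample
    covariance (shape: n_bands x n_modes), and the fraction of total signal
    variance ("energy") captured by each mode.
    """
    spectra = np.asarray(spectra, dtype=float)
    mean = spectra.mean(axis=0)
    centered = spectra - mean
    cov = centered.T @ centered / (spectra.shape[0] - 1)
    eigvals, eigvecs = np.linalg.eigh(cov)        # eigenvalues in ascending order
    order = np.argsort(eigvals)[::-1][:n_modes]   # keep the largest n_modes
    energy = eigvals[order] / eigvals.sum()
    return mean, eigvecs[:, order], energy

def kle_signature(spectrum, mean, modes):
    """Project spectra onto the KLE modes; the coefficients act as a signature."""
    return (np.asarray(spectrum, dtype=float) - mean) @ modes

def classify(signature, library):
    """Hypothetical lookup: return the library label with the nearest signature."""
    return min(library, key=lambda label: np.linalg.norm(signature - library[label]))

# Synthetic spectra for illustration only (not measured data).
rng = np.random.default_rng(0)
bands = np.linspace(400, 1000, 60)                        # wavelengths in nm
healthy_shape = 0.5 / (1 + np.exp(-(bands - 700) / 20))
diseased_shape = 0.3 / (1 + np.exp(-(bands - 720) / 40))
healthy = healthy_shape + 0.01 * rng.standard_normal((40, 60))
diseased = diseased_shape + 0.01 * rng.standard_normal((40, 60))

mean, modes, energy = kle_basis(np.vstack([healthy, diseased]), n_modes=3)
library = {
    "healthy": kle_signature(healthy, mean, modes).mean(axis=0),
    "diseased": kle_signature(diseased, mean, modes).mean(axis=0),
}
unknown = diseased_shape + 0.01 * rng.standard_normal(60)
print(classify(kle_signature(unknown, mean, modes), library))
```

In this simplified form the KLE is equivalent to principal component analysis of the spectra; a signature database would hold one reference entry per plant condition rather than the two synthetic classes used here.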

This AI-enabled smart sprayer system for raised plasticulture beds and row-middle weed control delivers precise herbicide spray in real time while maintaining accuracy under extreme weather conditions. Weeds and diseased vegetation create ongoing challenges in agricultural production. Weeds compete with crops for sunlight, nutrients, and water, so ineffective weed control can reduce overall yield and crop quality. As growers increasingly adopt precision agriculture technologies to address these issues, the global smart crop scouting and smart spraying market, valued at $5.33 billion in 2024, is projected to reach $19.55 billion by 2034, reflecting a compound annual growth rate of 13.86% over that period. However, existing sprayer systems are optimized for flat, soil-based rows and struggle to operate in the uneven, sandy terrain of raised plasticulture. Therefore, there is a clear need for precision agriculture technologies that can reliably target weeds on raised beds and row middles while withstanding extreme weather conditions.

Researchers at the University of Florida have developed an AI-enabled smart sprayer system for raised plasticulture beds and row-middle weed control, maintaining precise, real-time herbicide applications even under the extreme heat, humidity, and wind typical of Southwest Florida. This invention integrates machine vision, machine learning, pump pressure regulation, and robotic mobility into a unified platform tailored for specialty crop production. By leveraging a detection model, the system activates nozzles only at verified weed locations, eliminating drift and overspray on uneven, sandy terrain. This directly addresses the pressure-control and terrain-adaptability gaps of existing see-and-spray solutions, opening new opportunities in precision-ag applications.
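As a rough illustration of how a detection model's output could drive nozzle activation only at weed locations, the sketch below maps bounding boxes to nozzle indices. Everything in it is hypothetical: the `Detection` fields, the `nozzles_to_fire` helper, the confidence threshold, and the assumption that nozzles are evenly spaced across the camera's field of view are not taken from the system described here.

```python
from dataclasses import dataclass

@dataclass
class Detection:
    label: str         # weed class reported by the detector (hypothetical field)
    confidence: float  # detector score between 0 and 1
    x_min: float       # left edge of the bounding box, in pixels
    x_max: float       # right edge of the bounding box, in pixels

def nozzles_to_fire(detections, image_width, n_nozzles, min_confidence=0.5):
    """Return sorted indices of nozzles whose lateral zone overlaps a detection.

    Assumes nozzle i covers pixels [i * zone, (i + 1) * zone), where
    zone = image_width / n_nozzles. Low-confidence detections are ignored.
    """
    zone = image_width / n_nozzles
    active = set()
    for det in detections:
        if det.confidence < min_confidence:
            continue
        first = max(0, int(det.x_min // zone))
        last = min(n_nozzles - 1, int(det.x_max // zone))
        active.update(range(first, last + 1))
    return sorted(active)

detections = [
    Detection("broadleaf", 0.90, x_min=100, x_max=250),
    Detection("grass", 0.30, x_min=500, x_max=600),   # below threshold, skipped
]
print(nozzles_to_fire(detections, image_width=640, n_nozzles=4))
```

A fielded system would also need to account for travel speed and the delay between image capture and the nozzle passing over the target, which this sketch leaves out.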

This AI-enabled smart system supports precision weed control and selective agrochemical or foliar nutrient delivery in specialty crops cultivated on raised plasticulture beds and row middles

The platform is designed to travel along raised plasticulture beds and row middles using terrain-capable tires and a structural framework configured for these environments. The system incorporates image capture devices that stream real-time imagery to an onboard computing system containing image processing, a trained object detection model, and a spray control module. The detection model, which was trained on weed types common to targeted crop systems, including broadleaf, sedge, and grass, identifies and localizes agricultural subjects and generates site-specific spray commands. These commands activate electronically controlled nozzles supplied by multiple DC pumps and proportional control valves. Integrated proportional-integral controllers regulate pressure and flow, maintaining consistent spray output while reducing drift and off-target application. The platform operates using a battery-powered configuration (48V 100A described) and includes protective structural elements that shield electrical and hydraulic components from wind, rain, heat, and moisture during field operations.
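The pressure regulation described above can be sketched with a basic proportional-integral (PI) loop. This is a minimal example under stated assumptions, not the platform's controller: the gains, the first-order pressure model, the units, and the names `PIController` and `simulate` are all invented for illustration.

```python
class PIController:
    """Proportional-integral controller with a clamped output and simple anti-windup."""

    def __init__(self, kp, ki, out_min=0.0, out_max=1.0):
        self.kp = kp
        self.ki = ki
        self.out_min = out_min
        self.out_max = out_max
        self.integral = 0.0

    def update(self, setpoint, measured, dt):
        error = setpoint - measured
        candidate = self.integral + error * dt
        output = self.kp * error + self.ki * candidate
        clamped = min(self.out_max, max(self.out_min, output))
        # Anti-windup: keep the new integral only while the output is unsaturated.
        if clamped == output:
            self.integral = candidate
        return clamped

def simulate(setpoint, steps=1000, dt=0.01, plant_gain=60.0, tau=0.5):
    """Drive a hypothetical first-order pressure model toward the setpoint.

    plant_gain is the assumed steady-state pressure at a fully open valve and
    tau is the assumed time constant in seconds; neither comes from the source.
    """
    controller = PIController(kp=0.02, ki=0.05)
    pressure = 0.0
    for _ in range(steps):
        valve = controller.update(setpoint, pressure, dt)   # valve opening, 0 to 1
        pressure += dt * (plant_gain * valve - pressure) / tau
    return pressure

print(round(simulate(30.0), 2))
```

The integral term is what removes the steady-state error that a proportional-only loop would leave, which is why PI control suits holding a constant spray pressure as pump load changes.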

This automated vision-based pest monitoring system can rapidly and accurately detect, monitor, geolocate, and count Asian citrus psyllids (ACPs) in citrus groves. ACPs carry citrus greening disease, which has no known cure and rapidly kills citrus plants, posing a serious threat to commercial citrus production in Florida, California, and other areas. Vector management is therefore critical to slowing the spread of citrus greening. The most effective way to control citrus greening is to eliminate carriers like ACPs with pesticide sprays before they infect trees. Since estimates from 2016 place the cost of spraying at $630 per acre, cost-efficiency demands that growers spray only infested sections. However, ACP infestation detection currently relies mostly on sticky-trap captures to determine spraying needs, which is slow and costly. Sticky-trap sampling is also inaccurate because these traps assess ACP numbers in flight rather than in trees, which can lead to over- or under-spraying. Tap sampling is far more effective, but it requires a large group of workers to sample trees by hand and is therefore labor-intensive. It also requires manually mapping sample locations in order to determine the infested areas of an orchard.

Researchers at the University of Florida have developed an automated vision-based tap system to monitor ACPs in trees and prescribe the right amount of pesticide in the right locations. The system is designed to mount on a mobile vehicle, such as a tractor, truck, autonomous vehicle or a variable-rate pesticide applicator. This system can then detect, distinguish, count and geolocate ACPs in an orchard to provide real-time data through wireless communication to generate a prescription map and control precise pesticide delivery based on pest incidence.

Vision-based system that automates ACP detection and infestation mapping in citrus groves for targeted pest control to prevent the spread of citrus greening disease

Tap sampling is done by manually beating the lower branches of citrus trees and collecting the fallen pests. This sampling accurately detects the presence of ACPs in citrus orchards and thereby allows growers to track their concentrations, but it is a labor- and time-intensive process. This vision-based system automates tap sampling in order to streamline ACP monitoring and elimination in citrus groves. A rotating drum beats the branches of the trees and catches the fallen ACPs in much the same way as a human laborer would. Once samples are taken, the system uses a grid of multiple cameras to generate a highly detailed image of the sampled pests and their locations. A smart controller uses a classification algorithm and artificial intelligence to visually detect and count ACPs. The system also interfaces wirelessly with remote mapping programs, automatically transferring geo-referenced ACP data to a cloud-based GIS database. This database not only stores the collected data (the number of ACPs, GPS coordinates, and the date and time of detection) but also compiles and maps the data to direct pesticide application based on ACP incidence. This maximizes spray efficiency and saves considerable time and effort by automating both data collection and data-based mapping. Although the system is currently optimized for ACP monitoring, its classification algorithm is developed with machine learning, so it can be trained to recognize and sample a variety of other pests as well.
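One way to picture the step from geo-referenced counts to a prescription map is to bin samples into grid cells and flag cells whose average count meets a threshold. The sketch below does this under assumed values: the `TapSample` record, the `prescription_map` helper, the cell size, the spray threshold, and the sample coordinates are all hypothetical and are not drawn from the system or from field data.

```python
import math
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime

@dataclass
class TapSample:
    lat: float           # GPS latitude in decimal degrees
    lon: float           # GPS longitude in decimal degrees
    acp_count: int       # ACPs counted in this tap sample
    timestamp: datetime  # date and time of detection

def prescription_map(samples, cell_deg=0.0005, threshold=1.0):
    """Group samples into lat/lon grid cells and flag cells to spray.

    A cell is flagged when its mean ACP count per tap sample is at least
    `threshold`. Both cell_deg and threshold are illustrative values only.
    """
    cells = defaultdict(list)
    for s in samples:
        key = (math.floor(s.lat / cell_deg), math.floor(s.lon / cell_deg))
        cells[key].append(s.acp_count)
    result = {}
    for key, counts in cells.items():
        mean_acp = sum(counts) / len(counts)
        result[key] = {"mean_acp": mean_acp, "spray": mean_acp >= threshold}
    return result

now = datetime(2024, 1, 1, 9, 0)  # placeholder timestamp
samples = [
    TapSample(26.46212, -81.43437, 3, now),
    TapSample(26.46218, -81.43441, 1, now),   # falls in the same cell as the first
    TapSample(26.46312, -81.43437, 0, now),   # a different, uninfested cell
]
for cell, info in prescription_map(samples).items():
    print(cell, info)
```

A variable-rate applicator could then consume the flagged cells directly, spraying only where the `spray` flag is set.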

SOUTHWEST FLORIDA RESEARCH AND EDUCATION CEN 2685 STATE ROAD 29 N IMMOKALEE, FL 341429733